[Related: ‘Should’ considered harmful, which ought to win some sort of award for the blog post that repulsed me when I first read it and then I kept mentally poking at it until I realized it was actually deeply insightful and totally right.]

[Content warning: fitspo to criticize it, effective altruism.]

A lot of people seem to view morality as this sort of grim obligation we are forced to discharge in order to get on with the business of living our lives. This position is perhaps most eloquently laid out in Nobody Is Perfect, Everything Is Commensurable, the thesis of which is that if you feel anxious and self-loathing unless you have done enough good, we have arbitrarily decided that “enough good” is equivalent to “donating ten percent of your income”, and if you have done that then you’re done now.

But to be honest, whenever I find myself reading blog posts like that, I find myself going: why?

I mean, I know that we keep doing this shit. I spend so much time feeling anxious, self-loathing, and ashamed because I’m a bad person that sometimes it seems like my most fundamental personality trait. I have spent approximately half my life low-key hating myself for falling down under the crushing burden of moral obligation.

But saying “if you donate ten percent of your income, then you don’t have to feel the grim burden of moral obligation anymore” doesn’t actually help me. It’s just playing Grim-Burden-Of-Moral-Obligation Whack-A-Mole. I’m donating ten percent, but I’ve also spent my entire life unemployed or underemployed, and so now I’m going to feel crippling anxiety and self-loathing about that. Wait, it’s because I’m mentally ill, so that’s probably okay– except that now I have to feel crippling anxiety and self-loathing about how slowly I’m recovering and whether I’m working hard enough on getting better. And if by some miracle I managed to get a well-paid job, I’d be freaking out all the time that I had managed to self-sabotage getting a promotion and wouldn’t be able to make a donation.

Even worse, I’ve found it creates this nasty crabs-in-a-bucket tendency in me. Instead of admiring people who are better than me, I find myself hating them. Every time I see someone who’s a vegan, or who’s donating twenty percent of their income, or who donates blood regularly, it’s not just them being good people, it’s another thing I need to do before I get to stop hating myself. This emotion is not just personally unpleasant but has bad consequences: I do not want to contribute to a community where people think “oh, I want to do $GoodThing, but I don’t want to trigger anybody’s self-hate, and I definitely don’t want to be a subject of seething resentment.”

And then recently I asked myself: why am I doing this?

Who put this burden of moral obligation on me? I think morality is a thing human brains do, an adaptation for living in groups, which I turn for my own uses just as I turn the adaptation for recombining DNA into enjoyable afternoons looking at gifs of large-breasted women taking their shirts off. There is nothing out there in the universe that says I have to feel guilty. And other people didn’t do this to me: not only is it true that approximately everyone I interact with wants me to stop hating myself, but if someone said to me “hey, Ozy, I think you should be curled in a ball of guilt and self-loathing” my answer would consist of the words “fuck” and “off”.

In short, the only possible answer to “who did this to me?” is “I did.”

Sometimes it is justified to cause yourself to feel unpleasant things. I am scared shitless of calling people on the phone, but I do it anyway, because some people refuse to use email like civilized people. So maybe there’s some kind of benefit from this crushing burden I have laid on myself.

If I give up the feeling of guilt and moral obligation, will I kill anyone or assault anyone or steal anything? Well, no. I don’t have the slightest desire to commit murder. It would probably make me really unhappy to have murdered someone.

Maybe that’s not a very fair example. After all, I feel no particular temptation to kill anyone; we should talk about something I do feel tempted to do. What about, say, responding to a friend setting a boundary with me by breaking into tears and telling them they don’t love me? That certainly seems very tempting in the moment, but I can’t imagine it’d be particularly good for our friendship. Besides, I’m friends with people because I care about them and want them to be happy; if I make setting boundaries around me dreadfully unpleasant, they’ll never set any boundaries, and then I’ll be hurting them all the time without knowing. So I don’t particularly want to do that either.

What about buying malaria nets for the Against Malaria Foundation? Of course I want to do that! I think it’s sad that people are dying of malaria, and I’d like to help. So I’ll donate, and I’ll work on being able to earn money in the future so I can donate more.

How about eating that delicious cookie that I know has eggs in it? Well, in the moment, it certainly smells wonderful, but I don’t really want chickens to be debeaked either. That sounds like it hurts! Those poor chickens! So nothing but Oreos for me.

What about my relationship with my parents? Well, I spent a whole lot of time feeling like it was my moral duty to continue to have a relationship with them, and it made me really miserable. And then I got angry and set a couple of reasonable boundaries and suddenly my experience of talking to my parents was infinitely more pleasant and, at least on my end, our relationship was much better. So, score one for doing-things-I-want-to-do-based decision-making procedures.

On the other hand, that terrible goddamn Moral Saints essay that makes me rock and shake and sob every time I read it? I don’t give a fuck about whether you think being a moral saint is a good idea, Susan Wolf. Nobody gave you input. I am not a moral saint (yet), but I would take a Moral Saint Pill in a heartbeat, because I want to.

But I don’t have to be a moral saint either! If you make an argument like “oh, from a utilitarian perspective, Ozy, you should at least have a minimum-wage job so you can donate money to charity”… well, I don’t want to? I am lucky enough to be in a privileged position where I can focus on my mental health and my writing, and I’m going to do that, because I want to and I can.

I mean! That is a terrifying thing to type! I am expecting a bunch of people to be like “Ozy, haven’t you noticed you’re a TERRIBLE PERSON? You don’t just get to do things because you WANT TO DO THEM! And if you do do that you don’t get to admit it! If you aren’t going to make yourself miserable, at the very least you need to self-flagellate about how horrible it is that you have class privilege when other people don’t.”

Fortunately, I don’t want to care about the opinion of imaginary people in my head.

And, you know, I think a lot of effective altruists struggling with moral obligation are in the same boat. There’s already a consensus about what you have to do not to feel guilty. You can’t do anything really glaringly evil, like being a Nazi or killing people or robbing banks. You should take care of your family, stay loyal to your friends, and take pride in your work. And around Christmas time, you should drop a couple dollars in the Salvation Army cup.

The fact that effective altruists have moved away from this consensus implies that our crushing sense of moral obligation isn’t doing a hell of a lot. If we didn’t want to be effective altruists, we wouldn’t be effective altruists. Most people aren’t. I mean, check it inside your own head: if you didn’t feel guilty about the suffering of animals and the global poor, would you still want to do something about it?

And, Christ, now that I’m thinking about it, it seems like there are crushing senses of moral obligation everywhere.

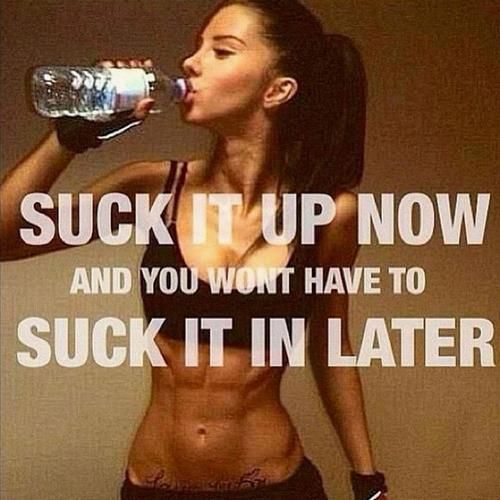

There’s a whole genre of fitspo like this:

Which implies that exercise is a boring, painful duty that we struggle through in order to prevent the horrible fate of being fat.

But… I actually like exercising? The ache in my muscles feels nice! I feel great afterward! I can do handstands against a wall, and maybe someday I will be able to walk around on my hands like Ty Lee! Exercise isn’t always enjoyable– sometimes the last thing I want to do is get off the couch and do a pushup– but all things considered I want to exercise. And in my experience, weightlifters love making their numbers go up, runners are passionate about the runner’s high, people who play sports appreciate the competition and camaraderie, and yogis won’t shut up about how everyone needs to do yoga. This whole “exercise is a grim horrible duty!” business just seems like it makes it harder for people to figure out what kind of exercise they enjoy, and therefore to feel motivated to actually exercise. (And in the event that you don’t enjoy any exercise, even going on a walk while listening to an audiobook, it seems to me that you have much better things to do with your life.)

Similarly, there are a whole bunch of traditionalists who won’t shut up about how marriage comes with certain obligations, and it’s a commitment, and it is your duty to do this, that, and the other thing. While one could disagree about their idea of what obligations marriage imposes on the spouses– and I do– it is striking to read essays about marriage that seem to completely miss the idea that spouses might love each other, and want to build their lives together, and choose to stay together and work on the relationship even when they are facing conflict or wish they’d chosen someone else, because fundamentally, on a very basic level, they want to be married to each other. And the thing is… all the couples I’ve met that were actually happily married don’t base their relationships on obligation or on self-sacrifice; they base it on the understanding that, no matter what happens, they are on Team Us.

Guys. It’s okay. Nobody is putting these yokes of moral obligation on us. No one is making us be skinny, or stay married, or give money to charity, or talk to our parents, or anything. We don’t have to hate ourselves or feel guilty or ashamed or anxious. It’s all made up.

If it’s something that actually matters to you– something that you genuinely care about– you’ll still do it, because it matters to you and you care about it. You don’t actually have to worry about not doing things you want to do. You might do less stupid shit that you don’t care about, but why are you doing stupid shit you don’t care about in the first place?

If you’re crushed under the burden of moral obligation, you can put it down.

Oreos are vegan? Wicked!

They dip great in almond milk.

How do you feel about cookies made with eggs from humanely treated chickens?

Meanwhile, I’m thinking about self-hatred examined on the specific details of the self-hatred vs. self-hatred as a symptom of a traumatized nervous system. I’m guessing that the nervous system needs to be calmed enough so that it’s possible to look at whether the self-hatred makes sense, and then logic can be helpful.

“Cage-free” or “free-range” chickens mean only what they literally say: no cages, an open door which the chickens may or may not walk outside into a yard that may not include any grass. They’re still typically debeaked, overcrowded, and generally quite miserable.

What about eggs from chickens kept in someone’s backyard?

Those I would eat.

This is an uncharacteristically dense post, Ozy. I mean, you’ve just written a long series of posts with the theme “Your brain lies to you” and then you turn around and say,

“If it’s something that actually matters to you– something that you genuinely care about– you’ll still do it, because it matters to you and you care about it.”

No, you won’t still do it. Not all the time.

I like and need strongly-worded inspiration to work out. But I don’t see that “suck it up now” as a moral obligation. I’ve set a goal for myself, a hard goal, a worthwhile goal, it’s a goal I want to achieve. But I know from experience that there will be days when I don’t want to do the necessary work. There are days when I go to the gym and I have a nagging injury that is annoying but doesn’t prevent me from doing that day’s training. There are days in my relationship when I rationalize excuses for being an asshole to a person I love. On those days, in that moment, my brain is lying to me and saying “You don’t really want to deal with this today” or “what’s the problem with just taking one day off?”. At those moments, I need a kick in the butt.

I mean, if it works for you, it works, and I’m not going to argue with that.

But like… in my experience, I exercise every day, and I have never not once required “feel miserable now or feel miserable about your body later!” as a motivation. In fact, I suspect that would kill my motivation by validating my brain’s (incorrect) feeling that exercise is miserable. What motivates me is “this is fun, you like this, and you will have a good time once you start.”

If there are no obligations, why would I do anything onerous? For instance, if I like meat more than I care about the marginal effect on animal suffering, why should I not eat meat? If I get more out of keeping 10% of my income than I care about the effects on poor strangers, why should I pledge to donate it? I endorse the conclusion, but it seems incompatible with utilitarianism, because this is about how much you care about something, not about how important it is from an impartial perspective.

“How much you care about something” is also a utility function. Mine seems to include a component for the welfare of others.

So does mine. But the post suggests that I shouldn’t do anything beyond the extent to which I care about it. It’s difficult (I say, impossible) to reconcile that with utilitarianism.

We’ve had this discussion. I am a eutilitarian, which is a nonutilitarian who agrees with utilitarians about everything.

Do you think other people should avoid eating eggs/meat/etc, and donate significant amounts to charity? If so, how do you reconcile that with the thesis of this post? If not, then doesn’t much of EA advocacy lose its bite?

I’m too Gryffindor Primary for this shit

No, that is a different meaning of the phrase “utility function”! These notions are really unfortunately named but it is important not to get them mixed up. Let me proceed to copy-paste what I wrote about this last time this came up.

There are two different senses of the word “utility”. The first is decision-theoretic; utility in this sense is a way of encoding an agent’s preferences. Let’s call this utility_1. It’s the thing you are maximizing the expected value of. Any consequentialist agent that satisfies certain assumptions acts according to one; this is Savage’s theorem. (Or the VNM theorem, if you don’t think probability is a notion that needs justifying.)

Then there is “utility” in the sense of utilitarianism, which is some fuzzy measure of well-being that nobody knows how to define. Let’s call this utility_2. “Utilitarianism” means somehow aggregating everyone’s utility_2 functions into a utility_1 function which you then act on. A utility_2 function could potentially also be a utility_1 function but there’s not really any reason to assume this (and utility_1 functions aren’t uniquely defined so that potentially causes some problems).

So, in short: A person with a utility function, in the sense of utility_1, is just a consequentialist, not a utilitarian. A utilitarian is specifically someone who attempts to aggregate people’s utility_2 functions into a utility_1 function, not just someone with a utility_1 function.

Sniffnoy, thank you, that makes a lot of sense. Your definition helps me to retroactively understand confusing conversations I’ve had in the past. 🙂

Sniffnoy, thank you for articulating a concept I think is very important using way less words than I’d be able to.

blacktrance: As an EA enthusiast, ethical subjectivist and Ravenclaw, I’ll take this question 🙂 Other people “should” do those things in the sense that I would prefer them to do those things. Many other people also “should” do those things in the sense that they themselves would prefer to do them. The point of EA advocacy is reaching those in the latter group who either haven’t reflected enough on their own preferences or haven’t realized that their preferences can be satisfied in those ways. From my perspective, EA is first and foremost a wake-up call which says that one should stop going with the flow and think seriously about the optimal way to satisfy one’s preferences, addressed to people for whom the lives and well-being of strangers / animals / future sentients are an important part of these preferences.

I should also mention that the best exploration of what exactly constitutes “utility_2” is the essay “What Makes Someone’s Life Go Best?” by Derek Parfit.

It seems to me that the opposite is true – a lot of people feel pressured to signal that they care about the kinds of things EAs care about (because who wants to be the monster who says “I don’t care about starving children in Africa”?) when they actually don’t care and don’t think that they should except to more easily conform to what’s socially desirable.

It seems the solution in that case is for people to work on being able to honestly articulate to themselves what they do and don’t care about, not for EAs to stop helping people bring their behaviors more in line with their values.

sniffnoy, your definition of utility_1 (decision-theoretic utility) isn’t quite right. The von Neumann-Morgenstern theorem is that if an agent satisfies certain assumptions, there is a real-valued function U over outcomes such that the agent’s preferences are isomorphic to maximising the expected value E[U] of the function. (Technically, it’s an infinite class of functions – vNM utility functions are only unique up to positive affine transformations, so U and xU+y with x > 0 are effectively identical.)

They don’t claim that the agent is *actually* trying to maximise E[U], only that “maximise E[U]” and “do what the agent most prefers” always lead to identical choices.
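To make the uniqueness point concrete, here is a minimal sketch in Python. The outcomes, utility values, and lotteries are made up purely for illustration, and the helper names (`expected_utility`, `ranking`, `affine`) are hypothetical. It checks that rescaling U by a positive factor and shifting it by a constant leaves the ranking of lotteries by expected utility unchanged, while a negative factor reverses it.

```python
from fractions import Fraction

# Hypothetical utilities over three outcomes (illustrative values only).
U = {"apple": Fraction(1), "banana": Fraction(3), "cherry": Fraction(10)}

# Each lottery maps outcomes to probabilities that sum to 1.
lotteries = {
    "safe": {"banana": Fraction(1)},
    "gamble": {"apple": Fraction(1, 2), "cherry": Fraction(1, 2)},
    "mixed": {
        "apple": Fraction(1, 4),
        "banana": Fraction(1, 2),
        "cherry": Fraction(1, 4),
    },
}


def expected_utility(lottery, utility):
    """E[U] of a lottery: probability-weighted sum of outcome utilities."""
    return sum(p * utility[outcome] for outcome, p in lottery.items())


def ranking(utility):
    """Lottery names ordered from most to least preferred under `utility`."""
    return sorted(
        lotteries,
        key=lambda name: expected_utility(lotteries[name], utility),
        reverse=True,
    )


def affine(utility, x, y):
    """Return the transformed utility function x*U + y."""
    return {outcome: x * u + y for outcome, u in utility.items()}


if __name__ == "__main__":
    print(ranking(U))                    # ranking under the original U
    print(ranking(affine(U, 7, -100)))   # same ranking: x > 0
    print(ranking(affine(U, -1, 0)))     # reversed ranking: x < 0
```

Because the probabilities in each lottery sum to 1, the expected value of xU+y is just x·E[U]+y, which is why only the sign of x matters for the ordering.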

Chrysophylax: Apologies if this is unproductive, but I feel compelled to point out that not only am I aware of what you are saying (indeed, I explicitly noted that utility functions are not unique, and if you look you’ll notice I actually wrote the article on Savage’s Theorem that I linked), but that you are objecting to something I did not actually say. What I wrote was: “It’s the thing you are maximizing the expected value of.” I didn’t write anything about “trying” or internal architecture, I wrote about action. It is a complete description of the agent’s revealed preferences, which need not coincide with the description used internally.

I mean, you’re right that I didn’t explicitly point out that distinction, so thanks for making that clearer for everyone else, I guess. But I’m afraid I must clarify your clarification; you wrote “They don’t claim that the agent is *actually* trying to maximise E[U]” which… well, what does “actually trying” mean? It’s ambiguous. I would say instead that they don’t claim that the agent is in its internal representation trying to maximize E[U], since, taken differently, it is “actually trying” to maximize E[U].

My apologies if I gave offence. I could have phrased that more tactfully and more informatively. I did notice that you wrote the article (which I’ve not yet had time to read) and will probably have questions when I have read it.

You said “It’s the thing you are maximizing the expected value of. Any consequentialist agent that satisfies certain assumptions acts according to one”. This seems to me to suggest that the causal chain is

Evaluate U for each possible outcome –> Evaluate all lotteries –> Choose the lottery that maximises E[U]

This is a false description of reality for many types of mind (certainly for humans). I was trying to make clear that U is not necessarily represented in the mind of the agent, but is instead an isomorphic description of the agent’s preferences: whatever rules really generate the agent’s behaviour can be combined to give the function U, which will produce the same outputs given the same inputs.

When I say that the agent is not claimed to be “actually trying” to maximise E[U], I’m trying to convey that the causal chain producing the agent’s decisions does not necessarily contain any mind-states about maximising utility. The theorem holds for agents that have no concept of utility, as long as they satisfy the assumptions. (Compare with the claim that there are an infinite number of systems equivalent to Peano arithmetic, but that the standard formulation is more real than the others because we actually parse 0 as “zero”, not “symbol representing zero”.)

>turn the adaptation for recombining DNA into enjoyable afternoons looking at gifs of large-breasted women taking their shirts off

You know, I lost my job less than a week ago and am feeling really down on myself. This sounds like an excellent idea.

Are you just… pretending akrasia doesn’t exist? Wanting to have a task completed does not in general make me want to work on the task. You seem to touch on this with the phone call example, but then drop it. For many people, exercising or applying for jobs or going to work or telling the truth or taking their insulin or whatever feels the way phone calls do to you, as a horrible burden that they force themselves to go through because they know (but often have to remind themselves) that the long-term alternative is even worse. Obligation is what this feels like.

[Content note / epistemic status: lack-of-exercise-induced guilt, which I’m not really certain is valid, and maybe the guilt itself proves Ozy’s point on some level]

I’m not going to comment on the post as a whole — it touches on the whole “are all actions selfish?” debate, which I’ve never quite formed a well-developed opinion on — but the gym example particularly interested me. It reminds me of a thought I often had when I was regularly going to the gym: the vast majority of the people there seem incredibly buff already, and are probably exactly those who need to be there the least. There’s an obvious explanation for this: it is these people who are naturally inclined to “love making their numbers go up… appreciate the competition and camaraderie”, etc. Unfortunately, the status quo where people use the gym almost in proportion to how much they want to use it seems to result in a gym-going population largely devoid of those (like me) who could most stand to gain health benefits from working out.

Very interesting post. I also often feel disappointed in myself for not being the perfect utilitarian etc., even though I think I do more than most when it comes to utilitarianism. Once you find your moral philosophy, it’s easy to get wrapped up in it. That said, I think it’s important to always strive to do better.

>it is striking to read essays about marriage that seem to completely miss the idea that spouses might love each other, and want to build their lives together, and choose to stay together and work on the relationship even when they are facing conflict or wish they’d chosen someone else, because fundamentally, on a very basic level, they want to be married to each other

I have a theory that this has to do with oppositional sexism. More conservative individuals are probably more o-sexist, and therefore try to avoid cultivating traits associated with the opposite gender within themselves. This results in a lot of conservative men and women who have absolutely nothing in common with each other, except maybe a desire to have sex and children. They have nearly completely different interests, because in order for them to have something in common, one of them would have to diverge from their typical gender roles. For people like this, marriage really would be a horrible chore.

I’m flabbergasted whenever I see a movie from the Fifties where several couples go to a party and the men and women pair off and have conversations in different rooms. Why on Earth would you marry someone if you have so little in common with them that you can’t have a conversation with them at a party? But maybe people had no other choice, in an era where oppositional sexism was stronger.

I agree with the result (people crushed under moral obligations should put them down), but disagree with the reasoning.

Let me first note that a lot of the comments above are from people who find their moral obligations useful instead of overwhelming. Thusly is it said, “Beware Other-Optimising”. Some people should feel stronger moral obligations, others should feel weaker ones, and a correct understanding of morality ought to let us say which are which.

I think the correct answer is that, to be blunt, most people (including myself a few years back) are getting utilitarianism horribly wrong. *They’re treating it like virtue ethics.* When you write posts about utilitarianism, Ozy, it comes across very strongly that you’re thinking about what you need to do to be a good utilitarian, not what would maximise total utility. It’s still *personal*, and that’s where it goes wrong.

The proper use of praise and blame is the proper use of everything else – maximising utility. Feelings of virtue and guilt are a *tool* that should be used to motivate people to do utility-increasing things. In the limit, virtue ethics and utilitarianism converge, because the correct definition of a virtuous person is “a person who maximises global utility”; but for people who aren’t logically omniscient, virtue ethics is fundamentally misguided. It’s a finger pointing at the moon. The goal is not to point very accurately, nor to make tremendous efforts to reach the moon: the point is to *get as close as possible to the moon*, by any and all means available.

The right question is not “what would a perfect utilitarian do in this situation?” but “what should *I* do in this situation to maximise utility?”. Look back at your post about Hardcore and Softcore EAs, where you got the answer right. The things that utilitarianism obliges Dustin Moskovitz to do are not the same as the things it obliges Ozy to do because Dustin is capable of being vastly more effective. Similarly, it obliges a superintelligence to do very much more than it obliges Dustin to do, because Dustin cannot solve the protein-folding problem or invent uploading.

Your argument takes a wrong turning when you talk about Moral Saints. Real!Ozy is not obliged to act like MS!Ozy because real!Ozy has never taken a Moral Saint pill. Real!Ozy has to deal with things like akrasia, and maladaptive feelings of moral guilt, and valuing things other than maximising global utility. Brains are real, minds are made by brains, and demanding that you do something your brain won’t allow is demanding that the laws of physics stop applying to you. Feeling guilty about not being Dustin Moskovitz is no more sane than feeling guilty about not being God. (Wanting to hurry up and become God is entirely sensible.)

MS!Ozy is a *different person* solving a constrained optimisation problem (maximise global utility) with *different constraints*; obviously the optimal points are different. Trying to act like you’re perfect when you aren’t leads to burnout, crippling guilt and other forms of resource misallocation – you not only fail to be perfect, you fail to be the best that you can be.

Utilitarianism obliges you, with the full and heavy weight of Law written in letters of flame a mile tall, to do the best that *you* can do – not the best that somebody else could do in your place.

Great post. I really enjoyed reading it and will need some time to think on it, for sure!

I fully endorse this view and have been advocating for something similar for years. I know many people who feel crushed under the weight of (moral and quasi-moral) obligation, and I am very happy to see someone I respect endorsing this view.
