[epistemic status: HANDWAVE HANDWAVE HANDWAVE]

When one is a utilitarian, inevitably the question comes up of what this “utility” thing we’re maximizing is. For many of my friends, the answer is preference satisfaction: the moral action is the action that satisfies the strongest preferences of the most people, usually giving precedence to more meta-level preferences (so, for instance, if I want a cookie, but I don’t want to want a cookie, you should not give me a cookie).

My dissatisfaction with preference utilitarianism is that it fails to answer the question “why should one prefer one thing over another thing?”

Most preference utilitarians tend to treat preferences– at least at a certain meta level– as unmalleable. This seems utterly contrary to my own experience, where preferences are terribly malleable. Operant conditioning is successful. I can get someone to like a song by cuddling with them while we listen to it. The media regularly convinces people to change everything from their fashion sense to their values. Advertising exists.

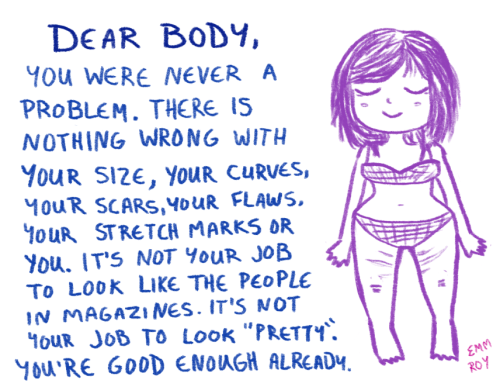

Many preference utilitarians say that it is morally licit to change people’s object-level preferences to match their meta-level preferences: for instance, if someone wants a cookie but is afraid of gaining weight, you can create a pleasant environment for them to eat non-cookie food in, so they associate non-cookie food with good things. On the other hand, the entire moral system has no answer about changing people’s meta-level preferences: for instance, if someone wants a cookie but is afraid of gaining weight, you can associate not being afraid of gaining weight with good things. This is basically a lot of what the body positivity movement is doing: while some of it is “your terminal goals of being sexually attractive or healthy will not necessarily be met by losing weight!”, a lot of it is pure operant conditioning.

Furthermore, while preference utilitarianism and hedonic utilitarianism end up giving the same answers to most questions, there are some questions where I think preference utilitarianism gives a wrong answer. First, imagine someone who is horny and desires to masturbate, but who believes that masturbation is morally wrong because it’s a misuse of the genitals and so wants to not want to be horny. The preference utilitarian answer in this case is to remove their libido or otherwise make their situation easier to manage. My answer is to attempt to convince them of a less evil moral system.

Similarly, think about people who are instinctively disgusted by the idea of gay people to the point that they don’t even want to look at us holding hands. A preference utilitarian might think that gay people and the disgusted people should live in separate communities where we never have to interact with each other. I personally want the latter group of people to stop having that preference, because it is a horrible preference.

So I end up being driven towards hedonic utilitarianism, in the sense that I want my utilitarianism to maximize happiness and minimize suffering.

A lot of hedonic utilitarians and critics of hedonic utilitarians end up thinking about maximizing happiness and minimizing suffering in a very simplistic way: they think about increasing the number of good brain sensations and decreasing the number of bad brain sensations. However, I think that picture is wrong.

An orgasm is probably the most intense pleasurable sensation a person can experience in the absence of drugs. However, a life of continual masturbation would not be described by most people as “happy”, much less “the happiest possible life.” They would instead be most likely to choose words like “sad” and “empty” and “unfulfilled”. So if one wants to maximize happiness, clearly one is maximizing something other than raw good brain sensations.

I tend to use the word “eudaimonia” for the thing I’m maximizing in order to prevent confusion on the part of the truly appalling number of utilitarians who think the maximally happy life is continual masturbation.

So what is eudaimonia? I don’t have a totally satisfying answer, but I have some considerations.

Eudaimonia is different for different beings. The eudaimonia of a cat is to take lots of naps, eat tuna, and hunt lizards. This is not the eudaimonia of the average human. Humans also seem capable of different kinds of eudaimonia: for one human, the ideally eudaimoniac life might consist of quiet study and long walks; for another, it might consist of raising a child; for a third, it might involve exploring mysticism and altered states of consciousness; for a fourth, it might be satisfying manual labor and participating in their local community. (For that matter, it might be all four at different times! –although that would be a fifth type.)

I think one fundamental aspect of eudaimonia is arete, often translated as “virtue” but better translated as “excellence” or “becoming one’s best self”. I think it’s a mistake, however– possibly a product of the typical mind fallacy– to assume that arete is the same thing for all people. A scholar might say “clearly, the highest arete is studying philosophy” and a mystic might say “no, it is connection with the Divine” and a blue-collar person might say “no, it’s the satisfaction of doing a good day’s work and taking care of your family.”

(Yes, I seem to have accidentally reinvented teleology.)

I have an unclear sense of what eudaimonia means in the general case, but I have a very specific idea of my own eudaimonia. My eudaimonia involves writing regularly, physical fitness, intellectually challenging work, not having any goddamn breasts anymore, and being able to bounce when I’m happy without anyone yelling at me. It does not involve lying in my bed feeling sad all day or dissociating while my System 1 optimizes my social interactions for people not hurting me. It’s the lift I feel in my heart when I think “I’m going to become a saint.”

Given the diversity of possible eudaimonias and the vagueness of my idea, I think we ought to be very autonomy-respecting. In general, we should assume that people have the right idea about their own eudaimonia, since they have access to their own feelings, while other people do not. It is quite common for, say, the scholar to look at the blue-collar person and say “gosh! You are doing such a terrible job of reaching eudaimonia, which is of course found in studying philosophy”, when in reality the blue-collar person is pursuing her own entirely non-philosophy-related eudaimonia. I also feel like we should compare notes about eudaimonias to figure out common threads: for instance, the experience of struggle to reach a goal, a certain amount of physical pleasure (such as candy or sex), a sense of meaning, or not having to have a long commute.

What are your thoughts on coherent extrapolated volition?

I like the idea. However, my real feeling is summed up by a tweet I just sent:

Coherent Extrapolated Volition: “I forgot things I don’t understand should be labelled ‘magic’ – Yudkowsky 2025”

I… kind of feel that transhumanism and technological progress are on course to wreck a lot of people’s eudaimonia?

Like, if you’re a competitive athlete, or even just someone who enjoys pushing themselves to become much more physically capable than other individuals, then a future of cybernetic body enhancements and/or insta-muscle pills, where it’s no longer a sign of great effort to achieve these physical feats, is not an improvement.

If it turns out that music and visual artistic composition actually are a science as well as an art, and that computers are better at it than we are, or that amateurs with the right software can easily do what one has trained their whole life to produce, this is not an improvement for the old talents.

Similarly, if computers start churning out nontrivial mathematical proofs, this will make people who devoted their lives to pure mathematics less happy, even if it makes computer scientists more happy.

I imagine a lot of the readers of this blog pride themselves on their intelligence; if we manage enhancements that raise IQ across the board, but more effectively the lower one started out, so that the range of raw problem-solving power is reduced, this is… not actually great for the formerly rare geniuses.

Mind you, all of these things may well make humanity as a whole better off in a utilitarian calculus, because there are a lot more average people than there are great talents in all of these spheres. But I don’t think we should pretend that the people whose eudaemonia was specifically centered on areas that are going to become un-special aren’t going to pay the cost.

There are knitting machines that can knit faster and better than humans and people still knit things for fun.

I don’t see why we would automate tasks which people actually find enjoyable/fulfilling. A shelf stacking robot makes sense – very few people have intrinsic motivation to stack shelves. A guitar playing robot? Not so much.

Why do people play video games at a difficulty above the lowest level, or refrain from using freely available hacks and cheat codes? Or people who have the opportunity to cheat in multiplayer games (video or otherwise), but don’t?

People actually don’t seem to have much of a problem with self-imposed or participant group-imposed challenges for fun.

Except that in the case of sports or video games there’s an assumption that you got to where you are without enhancement at any point in the past. I guess maybe if it was somehow fully reversible you could get away with it, but once you have robot legs you’re more or less disqualified from football.

I enjoyed this post!

A few things:

1. You still can’t “satisfy the strongest preferences of the most people,” nor can you ensure “the greatest [happiness|eudaimonia] of the greatest number.” Aggregating individual utility functions is a problem that you have to solve if you really want your ethics to have the form “here’s an Ethics Function, maximize it.”

(I don’t want this to be an isolated demand for rigor; I think you can profitably talk about utilitarianism without having solved all of its technical problems. This just bugs me, is all.)

2. I think the point about malleability of preferences is a really big one, and not just for utilitarianism. Social choice theory, for instance, is all about “how do we aggregate preferences?” If you try to apply the theorems of social choice theory without taking into account that preferences are malleable, you’re going to get results that just don’t apply in the real world.

3. On the other hand, trying to use the malleability of preferences to your benefit risks… well, what happens if you try to universalize that? You say that the correct way to deal with “people who are instinctively disgusted by the idea of gay people” is to change their preferences; someone in the ex-gay movement might say that the correct thing to do is to change the preferences of gay people. Ignore the meta-ethical questions this raises; do you really want people like that to have a weapon that strong?

4. Yes, indeed, you do seem to have reinvented teleology and based a moral system on it. You know, there are in fact people who think about morality based on teleology, arete, and eudaimonia. They are not typically called utilitarians. 😉

(…join the dark side!)

“First, imagine someone who is horny and desires to masturbate, but who believes that masturbation is morally wrong because it’s a misuse of the genitals and so wants to not want to be horny. The preference utilitarian answer in this case is to remove their libido or otherwise make their situation easier to manage. My answer is to attempt to convince them of a less evil moral system.”

Is this just when they have a false belief that you can change through talking to them, or also when they have a terminal value of masturbation being wrong?

“Humans also seem capable of different kinds of eudaimonia: for one human, the ideally eudaimoniac life might consist of quiet study and long walks; for another, it might consist of raising a child; for a third, it might involve exploring mysticism and altered states of consciousness; for a fourth, it might be satisfying manual labor and participating in their local community”

Let’s say that one person’s eudaimonia requires X resources to fulfill. Would it be immoral to nonconsensually alter their brain so that their new eudaimonia requires only 0.01X resources, so we can use the rest on fulfilling other people’s eudaimonia?

Would you value a society where every person has the same eudaimonia less than a society with lots of variation, even if the total eudaimonia fulfillment and population were the same?

Why is studying philosophy αρετή where abstaining from masturbation to please God is not?

The same question occurred to me; if someone wants to remove their genitals entirely to avoid lust (like the Skoptsy sect), is that a eudaimonic preference which should be fulfilled (like helping body-dysphoric people who don’t want breasts), or a “horrible”/“evil” preference which should be changed? The end result either way is a person whose object-level preferences agree with their meta-preferences, so how can we tell which one is better? A more extreme case: if wireheading sounds “unfulfilling,” why not self-modify to find it fulfilling; or if it already sounds like a good idea, why not self-modify to prefer more complex fulfillment? Once you allow changing meta-preferences, I’m not even sure if “right” and “wrong” apply any more.

Is this a criticism of philosophy or a defense of ruining your life* for God?

*not that abstaining from masturbation is sufficient to ruin your life by itself, but it typically goes along with a long list of exhausting, pointless rules that, when taken together, will ruin your life

I do agree with the point about “evil” preferences, though. I think some preferences are based on factually incorrect beliefs and people would be pleased to be rid of them, but there’s a lot of preferences I instinctively react to as evil but which are not based on factually incorrect beliefs but merely differences in desire.

I recommend Jo Walton’s The Just City, an sf novel about Athena’s effort to create Plato’s Republic. Things do not go entirely as planned; even having robots doesn’t help as much as one might think. The book is the first of a trilogy.

Ever since the ancient days of first year philosophy, I’ve thought the notion of people having any clear meta-preference hierarchy structure was a marginally helpful oversimplification at best and an incoherent source of deep confusion at worst.

“The preference utilitarian answer in this case is to remove their libido or otherwise make their situation easier to manage. My answer is to attempt to convince them of a less evil moral system.”

I think preference utilitarianism agrees with you here. Humans generally prefer to have true beliefs (in addition to gaining a lot of instrumental value from accurate beliefs). If you’re a human who prefers to set a person on fire because you think they’re a witch, to eschew masturbation because you think you were built by an Aristotelian telos that hates masturbation, or to scorn homosexuals because you think you were built by a deity that hates gay sex, that preference is something the truth can destroy (and, therefore, something a satisfied preference-for-truth can destroy).

“I end up being driven towards hedonic utilitarianism, in the sense that I want my utilitarianism to maximize happiness and minimize suffering.”

The preference utilitarian can agree that we should maximize happiness and minimize suffering, by saying that all agents automatically prefer happiness and automatically disprefer suffering (ceteris paribus). But the preference utilitarian thinks there are other valuable things that need to be traded off against happiness and suffering, whereas the hedonic utilitarian thinks that the *only* value-bearing things are happiness and suffering.

Truth, incidentally, is an example of a virtue I wouldn’t normally classify as compatible with hedonism or eudaimonism. Truth can be instrumentally valuable to an oldschool utilitarian, but it can’t be instrumentally valuable if terminal value supervenes on the states of agents.

“it can’t be instrumentally valuable if terminal value supervenes” should be “it can’t be terminally valuable if terminal value supervenes”

Even if we had been created by a deity, it would not be good to hate things merely because the deity arbitrarily hated them (except possibly as part of an instrumental wrath-avoidance strategy).

I have trouble thinking of a scenario where hedonic utilitarianism that optimizes arete/eudaimonia differs from preference utilitarianism that places a high value on meta-preferences. If the ‘disgusted by gay people’ person says that observing a gay couple holding hands interferes with his eudaimonia, how can we argue against that? In your last paragraph, when you say that we should ‘assume people have the right idea about their own eudaimonia’, that sounds exactly like preference utilitarianism.

Perhaps I’m being uncharitable, but it sounds like you’re describing the fulfillment of your preferences as being ‘eudaimonia’, but someone whose preferences include a desire not to masturbate is just operating under an ‘evil moral system’.

Is there a reason you call yourself a hedonic utilitarian instead of a eudaimonic utilitarian? Seems like it would pretty easily convey what you’re trying to maximize.

I’m going to tentatively suggest that the pons asinorum of a moral system is making it so it can’t rationalize arbitrary vices like masturbation. Rationalizing arbitrary vices is what humans are best at already, we should want our morality to take us somewhere better than where we are.

Why is masturbation a vice?

Let me get a little more abstract:

What’s wrong with rationalizing *arbitrary* vices? If they’re arbitrary, you shouldn’t even have to rationalize them, you should instead ditch the broken moral system that compels you to.

If it increases your utility and does not hurt anyone else’s, whatever prevents you from doing it is as objectively evil as anything ever can be.

First, a “preference utilitarian” is an oxymoron, and a “hedonic utilitarian” is a redundancy. What you’re looking for is preference consequentialism vs utilitarianism.

Second, I would argue you’re not a utilitarian at all. Instead it sounds like virtue ethics mixed with consequentialism. Further, I’m not exactly sure how your view differs from just plain virtue ethics in any *practical* sense. People should have a great deal of autonomy to be their best selves, those things are different, being their best selves makes no reference to the consequences of being that self to others (and there’s a paradox if you think they *should* weigh the consequences: what if being your best self didn’t maximize eudaimonia? Should you be a worse version of yourself to increase eudaimonia globally?).

Finally, you start out by saying people’s desires can be wrong and bad, and yet you think these same people are best positioned to know what their best self is? What if a woman thinks, being raised in a sex-negative culture, that her best self includes her “lying back and thinking of England” during sex?

As a layperson, there seems to have been a subtle and confusing shift in language that I’ve never seen especially explained or remarked upon, but to me is noticeable nonetheless.

Utilitarianism, in the original sense of, say, Bentham, was once indeed construed as a specific form of consequentialism, where the morally relevant consequences were the hedonic-ish ones (pain/pleasure, sadness/happiness) or something similar, and you added it all up to decide which outcomes were morally correct.

But then over time it feels like:

1) “Something similar” gets relaxed more and more – since the basic framework seems pretty convincing, but “naive” hedonic evaluations of outcomes start to seem to not quite give the right answers all the time, the more you do tricky thought experiments, and/or make empirical discoveries about how things like pleasure actually work……

2) The second aspect, “adding it all up”, became more and more critical to popular conceptions – perhaps because no other prominent ethical philosophies really seem to engage with that notion much, and how else can you have so much ridiculous fun talking about trolleys all the time?

So utilitarianism becomes more like a meta-level description for any ethical theory that calls for a quantity to be maximized. The quantity is labelled “utility”, but it’s essentially an abstract placeholder.

Or in an arguably even *broader* sense than consequentialism, utilitarianism becomes the meta-ethical premise that “morality is subject to maths.” I.e. that it’s coherent to talk about agents having utility functions, and/or there existing a utility function description of the world’s objective morality; and these things can be maximized, or at least, satisficed, in principle; and maybe utility can’t be added, exactly, but at least it’s a lattice, or poset, or something….. And more concretely, trying to calculate how to more cost effectively give money to charity (say) makes you an unusually virtuous person, not a nasty evil one.

Certainly this feels to me like the most common usage in the blogosphere etc, and I assume it’s not completely divorced from the contemporary literature either.

And so preference utilitarianism (which is a super real and common thing, and neither something Ozy invented nor an oxymoron) is the least of it. You can have a utility function that values virtuous agents – which makes you a utilitarian virtue ethicist of sorts – or a rule utilitarian, which is to say, a deontologist 😛 The important thing that makes it “utilitarian” is to at least attempt to talk coherently about things like whether two virtuous people existing is in some sense a morally better universe than one virtuous person existing, etc.

Do we care about what feels good or about what people attach the label “happy life” to? Because people can attach that label to a life with a lot of unpleasantness, and I don’t see why we should respect that. A somewhat cynical take is that it’s really weird to say that being wireheaded is the best possible life, and people have been taught there’s more to a good life than pleasure, so they say that despite the fact that pleasure is what’s actually good.

I think a lot of Ozy’s disagreement with PU arises from an important moral intuition that often gets overlooked when talking about preference utilitarianism: When determining how well-off someone is, our “morality” preferences don’t “count.”

If I give money to an efficient charity, it doesn’t seem like I’ve made myself better off. It seems like I’ve made myself worse off, and made somebody else better off. Obviously on some level I “preferred” to donate that money. From the perspective of my VNM utility function, I am better off. But it seems like when we talk about how “better off” we are in the vernacular we aren’t talking about our VNM utility functions. We’re talking about the portion of our VNM utility functions that cover the way we want to live our lives. This corresponds pretty well to Ozy’s description of “eudaemonia.”

Now, not all of our “others-interested” preferences work like that. There are some instances where we do feel like we’re better off because we helped others. I think that these are the “warm fuzzies” (as in the classic Less Wrong article “Purchase Fuzzies and Utilons Separately”). But there is definitely a set of preferences we have that we consider ourselves “worse off” in some sense for having them fulfilled.

The issue of the guy who thinks that masturbation is wrong can be solved with this framework. His belief that masturbation is wrong is a “morality” preference, so it doesn’t count. His desire to masturbate is a personal “eudaemonic” preference, so it does count. So if you’re trying to maximize people’s wellbeing, you should let the guy keep masturbating. (Of course, if you are a moral realist, you could just argue that the guy has a false belief about the morality of masturbation. But then we could just modify the problem into the Least Convenient Possible World where the guy is some sort of amoral Masturbation Minimizing UFAI who knows what it wants to do is morally wrong, but doesn’t care).

By contrast, if the guy wanted to reduce his desire to masturbate because he had a meta-level desire to study science, and all that masturbating was getting in the way, a utilitarian would help him reduce his desire to masturbate. That is because wanting to study science is a “eudaemonic” preference, so it does count.

Failure to properly integrate the “moral preferences don’t count” intuition into a moral framework can lead to a variety of errors. One common error is the preference utilitarian who thinks that the bigoted morality of a religious fundamentalist needs to be included in the utility calculations. Another common error this generates is the person who concludes non-egoist values are irrational (because they reason your VNM is identical to your wellbeing, and helping others doesn’t contribute to your wellbeing).

I think Ozy’s “eudaemonic utilitarianism” is a step in the right direction of codifying the “morality doesn’t count as wellbeing” intuition, and does a better job of identifying what preference utilitarianism is supposed to be maximizing than the vague term “preferences,” which can easily be mistaken for one’s VNM utility function.

———————————————————————————————-

In some of the other cases I think Ozy has simply chosen bad examples that generate confused moral intuitions. In the case of fat-acceptance, I think most people have a meta-level preference to not be anxious about their body all the time, and that preference is even more-meta than their preference to not gain weight. In cases where it isn’t, trying to condition people to have different beauty standards for themselves is morally similar to attempts to condition away gayness. It’s obviously much less horrible, of course, but the difference is one of degree, not kind.

Similarly, the example of the people who are disgusted by gayness is complicated by how big a political issue gay rights are. To make intuitions clearer, I would replace homophobes with arachnophobes, and gay people with people who enjoy carrying tarantulas with them everywhere they go. In this case I think it’s obvious that segregating the arachnophiles into another community would be excessive, but it might not be unreasonable to ask them to at least cover up their tarantulas in public.

I think that’s a terrible analogy, because to make it correct you have to in fact imagine something where:

* the arachnophobes have their own pets, which they are allowed to take everywhere

* the arachnophobes don’t just not want to see spiders, they think liking spiders is morally wrong and instil this value into their spider-loving children so that they grow up feeling like they are bad and wrong for liking spiders

* many of the spider-likers love their arachnophobic friends and still want to spend time with them to the extent that separating these people is a disutility to be accounted for

* many of the spider-likers feel intense disutility about having to hide that they like spiders

* love for your pet is considered a core value and basic human need, to the extent that everyone assumes you have a pet or want one and many things that are culturally considered important milestones in life are things that you are expected to do publicly, with your pet.

I think when you factor all of this in, it becomes clear that the best utility-maximising option (especially accounting for the fact that arachnophobes *will* continue to have spider-liking children) is to cure arachnophobia.

Yes, this is very important. Someone who believes that masturbation is wrong shouldn’t be counted as not wanting to masturbate, they should be counted as having an erroneous moral belief – we’re trying to determine what moral beliefs are correct, not create some meta-moral framework.

Quote is from this comment.

Given the rather large assumption that moral beliefs can be correct.

I just want to nitpick about this:

“An orgasm is probably the most intense pleasurable sensation a person can experience in the absence of drugs.”

To me, orgasm is far from the most intense pleasurable sensation I can experience. The most intense pleasurable sensation I can think of is falling asleep in a safe, comfortable place while physically tired but in no physical or emotional state of distress.

This does not contradict Ozy’s conclusion, though.

“It does not involve […] dissociating while my System 1 optimizes my social interactions for people not hurting me.”

Wow, that sentence really hit home. I didn’t fully realize until now that this is a thing people do, and that I do it as well. Kinda had a nagging suspicion, but lacked the words to express it. Need to meditate on it for a while…

It occurs to us that you have not actually solved anything. You have just moved the problem from ‘preferences’ to ‘eudaimonia’. Consider the case of someone whose eudaimonia is not masturbating and shouting at gays to achieve ‘a connection with the divine’.

At the very least I find it helpful to have a new word (eudaemonia) that substitutes the old one (the sum of personal preferences) without all the connotations the old one had. I didn’t read all that much on utilitarianism, but damn… ‘preference’ is just really spent, to a point where I can’t seem to bring my head to associate new ideas with that word. But maybe my brain is just weird.

Also, the aggregation of an individual’s preferences enables us to talk about it as a coherent concept of how that individual’s life should be, according to their own wishes. That’s taking it from abstract “preferences” to a more individual-centric discussion, I feel. Which is probably old oats, but I didn’t stumble across the idea of a “sum of preferences for an individual’s life” yet.

I enjoyed this post! But I find your morality horrifying.

Instead of a life of continual masturbation, let’s go for a purer example and consider a life of continual orgasm. For the sake of the example, let’s pretend the physical sensation of orgasm could feel just as pleasurable after hour 10,000,000 as it does at hour 1. People on the outside might indeed describe that as sad, empty, or unfulfilled. However, those people would be wrong (at least about the sad part; it might accurately be described as empty or unfulfilled). To the person experiencing the continuous orgasm, the experience would likely be described as amazing, perfect, and awesome. And it’s the inside view that counts.

Because here’s the thing: fulfillment, excellence, or any of the other components of eudaimonia don’t matter to people experiencing happiness. Those things only matter to us when we aren’t experiencing pleasurable brain states. When we are experiencing pleasurable brain states, then by definition, we are not significantly bothered by anything, least of all being unfulfilled or failing to be excellent. When people are wireheading, they don’t care that they are not being excellent. They only care that their pleasure centers are being stimulated (maybe – our technology and the state of our neurological science aren’t really at the point where we can understand exactly what’s going on, but I think the concept holds if we imagine perfect future wireheading).

From a utilitarian standpoint, the simplistic view is correct. Maximizing good brain sensations and minimizing bad brain sensations are all that matters. Eudaimonia might be an instrumental way of causing good brain sensations, given that people can’t just feel happy all of the time. But if Omega offers me the opportunity to cause everyone to continuously feel good all of the time, you bet I’m going to do it, and if you don’t, I think your ethics are seriously flawed.

An interesting study was recently conducted on the relationship between a happy life and a meaningful life. “Meaning” was undefined, but it seems to be consistent with your idea of eudaimonia. The conclusion was that they are largely incompatible. A meaningful life is not a happy life, and a happy life is not a meaningful life. Meaning is derived from adversity and struggle. Happiness is derived from having one’s needs met. Given the choice, I don’t see how anyone could argue with a straight face that meaning is better.

My suspicion is that we promote the idea of meaning (or, in your case, eudaimonia) for the same reason that the more poor and marginalized tend to be more religious: we want to feel as though our bad feelings matter. The more downtrodden or unhappy a person is, the more likely they are to feel as though there is virtue or significance to being downtrodden or unhappy, and to make that somehow “better” than all the happy well-off people (“the meek shall inherit the Earth,” etc). It’s a noble lie, but it’s a lie. There is no virtue in misery. There is no meaning in suffering. Good brain sensations are good. Bad brain sensations are bad. To a brain (which is the only part of you that cares), nothing else should, or even could, matter.

I have to disagree with your assessment of that study you linked. They did not find that meaning and happiness were ‘largely incompatible’. They found that there was a pretty strong positive correlation between happiness and meaning. Their findings suggest that it’s possible to have a very happy and meaningful life, but not a life that is both perfectly happy and perfectly meaningful. Given a choice between a life that has 90% of optimal happiness and 90% of optimal meaning, and one that has 100% of optimal happiness and 25% of optimal meaning, it’s not clear to me that I should choose the latter option.

Ok, I think you’re right that I overstated the case a bit. Happiness and meaning were pretty strongly correlated, but the point of the study was to identify the factors which affect happiness and meaning differently, and they found a number of factors which have positive effects on one, but negative effects on the other, so there are definitely areas where we have to make a zero-to-low-sum choice between happiness and meaning.

In your example, one should always choose the life that has 100% of optimal happiness. Although, from a utilitarian perspective, that’s not always right. The utilitarian version would be a choice for *everyone* between 100% happiness/25% meaning and 90% of each, in which case you should choose 100% happiness. If it were possible (it’s not, as the two factors have around .7 correlation), it would be fine to choose 100% optimal happiness/0% optimal meaning, because meaning is irrelevant to optimally happy people. People experiencing pleasurable brain states are unconcerned with whether their lives have meaning.

And I think your morality is horrible. There are indeed tradeoffs between meaning and happiness, but to conclude from this that “we should always sacrifice meaning to promote happiness”….

Well, look at Nozick’s arguments about the experience machine. If you’re wireheading, you’ve given up the opportunity to actually do certain things and be a certain type of person. These are important, terminal values, at least to some people.

I dunno, dude. I’m probably thinking with aesthetics, here, but if you really and genuinely believe that the good is as many people as possible wireheading, the best thing I can recommend is to read Infinite Jest.

augh, s/horrible/horrifying/

Well, I’m an egoist, so I’m sure most utilitarians think my morality is horrible, but even on utilitarian terms, I think we should sacrifice anything in the name of greatest overall happiness (however one goes about measuring that sort of thing). I reject the view that doing things or being things are actual terminal values for anyone. I think that, if those people were wireheading or otherwise experiencing pleasurable mental states, they would not be at all concerned with doing things or being the right kind of person.

The good is not necessarily as many people as possible wireheading, because as long as some people aren’t wireheading, then there are still tradeoffs to make. There is also the complication that wireheading technology is in its infancy, and we don’t know whether we are actually creating pleasure or something else. BUT if wireheading worked perfectly AND everyone in the world could do it while automatic processes kept us alive and reproducing, then that would be unequivocally the best possible scenario on utilitarian grounds. In that situation, nobody’s terminal values would be anything other than to keep experiencing pleasure. The goals of hedonic and preference utilitarianism would be served.

The fact that this conflicts with our moral intuitions is just evidence to me that our moral intuitions aren’t to be trusted.

I am also not any sort of utilitarian, so trying to convince me on utilitarian grounds isn’t going to work.

As to “nah those aren’t real terminal values”… maybe you’re typical-minding just a little bit? Nozick seemed to think they were terminal values. David Foster Wallace seemed to think they were terminal values. Aristotle seemed to think “being a certain way” was, like, the terminal value. I think they’re terminal values. Perhaps they aren’t for you, and you would prefer to plug into a working wireheading machine. Okay. But basing a moral system on your preferences, while ignoring the stated preferences of lots of other people, is a really dangerous idea.

When it comes down to it, my true objection is that I’ve tried being an indeterminate pleasure-blob, and I don’t think my problems with it were that it was insufficiently pleasurable. I’d rather stick with my untrustworthy moral intuitions than turn everyone in the world into, essentially, high-end heroin addicts.

I think that’s probably the correct decision, given the world we live in, where it’s impossible to experience pleasure all of the time. It’s possible that I’m typical-minding, but I think it’s more that maybe I have a conceptual block. I’m having trouble even picturing what fulfillment or satisfaction would mean if not the mental state of feeling fulfilled or satisfied. And if that’s all we’re talking about, then it seems like wireheading is still the best bet – we just need to figure out the right electrical signals to the brain to make people feel fulfilled and satisfied. If that’s not what we’re talking about, then what are we talking about?

Have you read this blog post by Nate Soares?

The stamp-collecting robot would prefer one stamp to the maximum level of stamps inside of its internal stamp counter. Similarly, I would prefer (and I believe most people would prefer) a life that seemed more “meaningful” to them to a life that seemed less “meaningful” but had their “meaningfulness” sensation maxed out. Therefore, I propose that meaningfulness is, to a degree, a state of the world, not just a state of one’s internal sensations.

Wfenza,

When you say things like ‘meaning is irrelevant to optimally happy people’ or ‘nobody’s terminal values would be anything other than to keep experiencing pleasure’, all that means to me is that you can change people’s terminal values to ‘nothing but pleasure’. It doesn’t necessarily mean that I should want you to change my terminal values in that way.

“we just need to figure out the right electrical signals to the brain to make people feel fulfilled and satisfied. If that’s not what we’re talking about, then what are we talking about?”

Well, okay, this is where Nozick’s third objection to the experience machine comes in:

A more concrete example: from the little I understand of Buddhism, nirvana is a state very much like plugging into a [happiness|fulfillment|eudaimonia] machine. But I don’t think a Tibetan lama would endorse plugging into such a machine, even an idealized one in principle.

Of course, one may argue that there is no “deeper reality” to have contact with. Fine. But at this point we’re arguing metaphysics, which is spectacularly unproductive even compared to arguing ethics. And, well, I think one should be skeptical about such things. As long as I’m thinking with aesthetics, let me quote Shakespeare:

@Ozy – I had not read that before! Thank you for that.

I agree that the stamp-collecting robot is a good metaphor for use in the current discussion. In the story, the stamp-collecting robot is programmed to choose the stamp over having its internal counter increased. So if asked, the robot will take the stamp, its internal counter be damned.

However, what if we fool the robot? I blindfold the robot and tell it that every time it high-fives me, I’ll give it a stamp. But actually, what I do is increase its counter. The robot will keep high-fiving me, because all of the robot’s experience of stamp collecting is filtered through that counter. What happens on the other side of the counter (i.e. in reality) actually is irrelevant to the robot. The robot just doesn’t know that, because it’s programmed to believe otherwise. And because I don’t really know anything about programming or circuitry, I’ll stop with the counter, but someone with more knowledge than me could easily find the part of the robot’s hardware or software that is pleased by the increase of the counter and trigger that directly, without need of the counter. The robot would be pleased, because its programmed purpose would be fulfilled, even though outside reality wasn’t being changed at all.

And we can do the same thing to ourselves. Fulfilling our preferences just means activating the part of our brain that is activated when our preferences are fulfilled. If we could do that directly (for everyone on Earth, and continue to live and reproduce), then there would be no need (from the inside view) to actually fulfill our preferences.

@Shemtealeaf – I would argue that your terminal value already is to maximize pleasure. If you accept the idea that experiencing pleasure all of the time will make you not care about anything else, it seems like you agree? Picture, by contrast, an attempt to make someone not care at all about pleasure by satisfying some other value. Is there anything you could provide a person with that would make them indifferent to the fact that they were experiencing horrendous suffering? If not, isn’t that strong evidence that pleasure is, at the bottom, all that really matters to us?

@Haishan – thank you so much for your contributions to this conversation. I really enjoy debating about this kind of thing, and you raise interesting and well-articulated points. I welcome any further comments that you would like to make tomorrow. By way of counterpoint, all I will offer is my own Hamlet quote:

Well this just well and truly fucked my mind.

Aren’t wireheading and an experience machine two different things, in that one provides “only” pleasure and the other makes any kind of experience you can imagine feel real? And even if you don’t actually do anything in the experience machine, can’t you say that you are the kind of person who’s willing to do the things you decide to do in it? And doesn’t the experience machine even give you some choices not available in real life, like the choice to show you’re the kind of person who dares to fly on a broomstick?

I just jerked off for science and I continued endorsing my morality the entire time.

At the moment of orgasm, did it bother you that you had other unmet goals?

At the moment of orgasm I can’t think. I also can’t think about my unmet goals when I’m asleep, and yet being asleep constantly is typically considered to be “a coma”, not “an ideally satisfying existence”.

I don’t think being asleep is an ideally satisfying experience. Outside of REM sleep, I mostly experience sleep as being completely blank. That is better than being in pain, but not better than experiencing pleasure.

At the moment of orgasm, I’m not feeling blank. I’m feeling incredibly good. Feeling incredibly good + not feeling bad in any other way (i.e. not worrying about unmet goals) = maximal goodness.

@wfenza

>At the moment of orgasm, did it bother you that you had other unmet goals?

So what if it does? Why should someone’s desires at the moment of orgasm be more important than their desires at other times?

And you seem to be confusing “not thinking about a desire at that moment” with “not having a desire.” That is wrong. Our goals are always in our brains, at all times. If we’re not thinking about them at the moment they haven’t vanished, they’re just stored somewhere (probably the prefrontal cortex). Saying I don’t have goals because I’m not thinking about them at the moment is like saying my computer doesn’t have “Crazy Taxi” installed on it because I’m not running that program at the moment.

Even if my brain was stimulated to maximal pleasure, my goals would still be stored on it somewhere. So it would still matter that I wasn’t fulfilling them.

I feel like this is going to end in scores of philosophers masturbating to see what their preferences are during orgasm. I have yet to decide whether this is a good or bad thing.

I don’t really understand this argument. To use your hypothetical, if you never try to play Crazy Taxi, then it doesn’t matter whether Crazy Taxi is installed. Similarly, if your “desire” never enters your conscious or subconscious mind, then it’s irrelevant whether it exists somewhere in your brain architecture. Even if your goals were stored somewhere in your brain, if they are never accessed, then it doesn’t really matter.

The reason why goals at the moment of orgasm are the most important is because that’s the only time that a person can ever have all of their desires met. If you are at less than 100% optimal pleasurable brain state, then you still have unmet desires (namely – to experience more pleasure). If you are at 100% optimal pleasurable brain state, you have no unmet desires. You have everything you want. If you are at 100% optimal eudaimonia, but only 50% optimal pleasure, you still have unmet desires.

@Lambert – if you can’t agree that scores of philosophers masturbating to see what their preferences are during orgasm is an unequivocally good outcome, I have to question your ethics.

Is it any worse than intellectual masturbation?

@wfenza

>Even if your goals were stored somewhere in your brain, if they are never accessed, then it doesn’t really matter.

Why not? If an AI built a sleeping adult person with a fully developed personality from scratch, it seems like it would be wrong to kill them. That is because they have preferences stored in their brain that it would be wrong to violate. The fact that the person hasn’t woken up, and therefore hasn’t consciously experienced their preferences yet doesn’t matter. All that matters is that the preferences exist.

If I’m not thinking about my car at the moment it doesn’t suddenly become okay to steal it. If I’m not thinking about my reputation at the moment it doesn’t suddenly become okay to slander me. All preferences matter, not just the ones your consciousness happens to be running at the time.

>The reason why goals at the moment of orgasm are the most important is because that’s the only time that a person can ever have all of their desires met.

Again, no, it isn’t. A person’s desires continue to exist inside their brain somewhere. And, as I pointed out elsewhere, they also still exist in the past.

>If you are at 100% optimal eudaimonia, but only 50% optimal pleasure, you still have unmet desires.

Let me expand on an example I pointed out elsewhere: Imagine we could create a superadvanced brain that could experience much more intense happiness than a regular one. It can also have eudaemonic preferences active in its conscious mind, even at a state of maximal pleasure. This brain feels happiness that is twice as strong as the happiness in a regular brain at maximum happiness, but it also has some unsatisfied preferences.

According to you, this brain is worse off than a more primitive brain at maximum happiness, because it also has some unsatisfied preferences. But this seems crazy. It seems obvious that it’s better off, since it is twice as happy and it can act to satisfy its eudaemonic preferences. This seems to me to indicate your framework is flawed.

Fulfillment still matters to me when I’m experiencing happiness. Even when I am at my most ecstatic there is still a part of me that assesses how fulfilled and excellent I am. Sometimes I will even consciously choose to cut a happy brain-state short in order to pursue fulfillment (And it’s not that I’m being prudent, I sometimes choose to do something fulfilling that has no long term benefit instead of something pleasurable that has no long term benefit).

So I think I can submit my own subjective experiences as evidence that people still care about fulfillment, even when they’re happy. And I somehow doubt that I am unique.

That being said, I would probably also take Omega’s offer to make everyone feel continuously good all the time, as long as those feelings didn’t incapacitate them so they couldn’t also do fulfilling things at the same time. My objections to wireheading stem from the fear that it would turn people into passive zombies who just sit around feeling pleasure. If there was some way to fix it so we could be maximally happy, but still be doing fulfilling eudaemonic stuff at the same time, I’d have no problem with that.

It still matters to me too, and I have often cut pleasurable experiences short for a variety of reasons. We don’t live in a world where 100% optimal happiness is possible, so there will always be tradeoffs for us mere humans.

However, what I’m advocating is viewing eudaimonia as a merely instrumental step which is one path to happiness, but viewing happiness (good brain feelings, or whatever you want to call it) as the only terminal value. If you can be happy without eudaimonia, do that! If you need eudaimonia to be happy over the long term, do that! But don’t fool yourself into thinking that eudaimonia has value independent from happiness. If eudaimonia makes you miserable, there is no reason to keep chasing it.

> But don’t fool yourself into thinking that eudaimonia has value independent from happiness.

I’m not fooling myself. It actually does have value independent from happiness.

>If eudaimonia makes you miserable, there is no reason to keep chasing it.

Yes there is. The reason is that I want to. It’s all the reason I need.

Also, you are basically arguing that if I was maximally happy, I wouldn’t want eudaemonia. I’ve argued that that is false, and I stand by that. But even if it was true, that wouldn’t matter. Why should what my maximally happy self prefers trump what my not maximally-happy self prefers? My desire for eudaemonia is important; the fact that I don’t have it during certain mind-states doesn’t change that.

If anything I’d say it might be the opposite. If my maximally happy self is so happy he doesn’t have any preferences, then it wouldn’t be violating any of his preferences to stop the happiness and replace it with eudaemonia.

Well, whether you would desire eudaimonia if you were maximally happy is an empirical question, so it seems silly to debate it on conceptual grounds. I know that if I were maximally happy, I would not care about anything else. You might be different. So instead I will focus on the conceptual question – assuming that if you were maximally happy, you would not want eudaimonia, what then?

I suspect that the only preference your maximally happy self would have is to continue being happy. I strongly suspect that if you took away all of his happiness so he was miserable, then gave him maximal eudaemonia, he would still be miserable and he would prefer not to be miserable anymore. There is only one self whose preferences are 100% satisfied – your maximally happy self. Every other version of yourself still has unsatisfied preferences. Therefore, from the standpoint of hedonic OR preference utilitarianism, the most moral outcome is for everyone to be maximally happy.

>There is only one self whose preferences are 100% satisfied – your maximally happy self.

Wrong. My maximally happy self has fewer preferences satisfied than many of my other selves. That is because I have all the same preferences that I do when I am not maximally happy. Just because I am crippled by happiness so that I can’t focus on them doesn’t mean they’re not there.

What if we invented a new, more efficient type of brain that could focus on its preferences and be maximally happy at the same time? Would you say that its preferences are less satisfied than the inferior model, even though it is just as happy? That doesn’t seem to make sense. It makes much more sense to consider preferences as something that always exist as long as they are stored in your brain, and the idea that they somehow cease existing if you are not thinking about them is a fallacy generated by sloppy thinking.

And that’s not even getting into the fact that even if my preferences totally ceased to exist, they’d probably still matter. Why should you disrespect a eudaemonic preference just because it exists only in the past, and not the present?

The eudaemonic preferences of people in the past matter too. That’s why it’s wrong to slander someone’s reputation, even when they are dead. As long as a preference exists at any time in history, it matters. Currently the preferences of my past and present selves are in harmony, so it makes sense to think of us as one continuous person. But if I was wireheaded so that they weren’t in harmony anymore, my past selves’ preferences to not be wireheaded would probably outweigh my maximally happy self’s preferences to be wireheaded. So the best thing to do would be to return me to normal.

>I strongly suspect that if you took away all of his happiness so he was miserable, then gave him maximal eudaemonia, he would still be miserable and he would prefer not to be miserable anymore.

If you took away someone’s happiness they wouldn’t be miserable. Misery is an emotion of its own. Someone without happiness would just feel blah. But in any case, I never said happiness was of zero value. I don’t want 100% eudaemonia and zero happiness, any more than I want 0% eudaemonia and 100% happiness.

Let’s call preferences that are stored somewhere but presently inactive “latent preferences” and other preferences “active preferences.” My view is that latent preferences are irrelevant. Or rather, latent preferences (or past preferences) are relevant only to the extent that we expect them to become active preferences in the future. If they never become active preferences, they don’t matter. The reason I shouldn’t steal your car or slander you is because I’m pretty confident that having a car and a decent reputation will become active preferences of yours in the near future, even if they are latent at the moment. It’s not that your latent preferences cease existing. It’s just that they have no significance unless at some point they become active preferences again.

I don’t understand why a past preference would have any significance at all. I have plenty of past preferences which are diametrically opposed to my present preferences. I would be pretty cheesed off if people decided that my past preferences were just as important as my current ones. Likewise, why should we care what dead people wanted? They are dead. Nothing that happens from now on can affect them. The only reason it makes sense to respect the dead is that disrespecting the dead tends to make *living* people feel bad.

Here is, perhaps, our conceptual disagreement: I don’t know what it could mean to have a preference that is not linked to some kind of good feeling. When I’m talking about preferences, I’m only talking about the things that, upon being accomplished, cause us pleasure. I think that maybe you’re talking about things that, upon reflection, we endorse. Reflective endorsement is one way to measure preferences, but I don’t think it’s a useful one. So instead of preferences, I’ll use the word “wants” to indicate things that make us feel good when satisfied and bad when unsatisfied. I want ice cream. I want equality of the sexes. I want our military budget cut. Those are all things that, if they were satisfied, would make me feel good. Wants are also fleeting; they can change at any time. I’ll continue to use “preferences” to refer to things that we reflectively endorse. For instance, I want to leave my job and lie around all day, but that would get me fired, so it’s not a thing that I reflectively endorse. I want to lie around all day, but I do not prefer it. Preferences can also change, but they tend not to change moment-to-moment like wants do.

To me, wants are ultimately the only important thing. Respecting people’s preferences is a good idea, but it is instrumental. We should respect each other’s preferences because we lack the information, skill, and power to provide everyone with everything that they want. So we recognize each other’s autonomy to decide the best way to go about satisfying our wants and to come up with our own preferences. However, I think the goal of any system of ethics ought to be to maximize the satisfaction of our wants. I’m an egoist, so I see the satisfaction of my own wants as my primary motivation, but the goal could easily be expanded by a utilitarian to be the greatest overall satisfaction of wants. If everyone in the world has all of their wants satisfied (so long as automatic processes kept them alive and reproducing), then I can’t see any reasonable argument for how that would be a bad thing, even if it caused them to abandon all previously-held preferences and wants.

This is entirely dependent on how our brains work – namely, that a person experiencing maximal happiness is incapable of having opposing preferences. A person whose wants are all satisfied seems to automatically have all of their preferences satisfied as well. Admittedly, I’m not completely sure on this point, but I’m looking forward to the masturbating scientists’ report on the topic. Were I to be implanted with a brain that could experience maximal happiness but also reflectively endorse other preferences… then I don’t know. I guess then it would make sense to consider eudaimonia separately from happiness? I’m somewhat skeptical that it’s actually possible to completely separate wants and preferences such that completely satisfying someone’s wants fails to satisfy all of their preferences, but IF that is possible, then I suppose preferences would matter independent of happiness.

>I have plenty of past preferences which are diametrically opposed to my present preferences.

I think most, if not all of the conflicts between your past and present preferences can be resolved by going meta. For example, if someone had a preference to be an astronaut when they were little, and now has a preference to be an engineer, both of those preferences are expressions of a meta-preference to “have a good job.” So they aren’t really in conflict.

>Likewise, why should we care what dead people wanted? They are dead. Nothing that happens from now on can affect them.

This is mostly true, but there are some instances where it seems to me we can still affect dead people. For instance, if someone clears the name of a dead person who was accused of a crime, restoring that person’s name in the eyes of their friends and family, I feel like that dead person is better off than they were before.

Similarly, lots of people take action to protect their legacy, so that they will be positively remembered after they die. They certainly seem to think that they are benefiting themselves.

>I don’t know what it could mean to have a preference that is not linked to some kind of good feeling. When I’m talking about preferences, I’m only talking about the things that, upon being accomplished, cause us pleasure.

There are lots of instances where people prefer to accomplish things, even though it would cause them pain. For instance, many people who suspect their partners are unfaithful investigate them, even though discovering the truth will cause them pain.

I personally try to seek out painful truths, even though they hurt, because I tend to value knowing the truth more than I value not feeling pain (within limits of course, I wouldn’t submit to a year of torture to find out what the capital of Ecuador was or something like that). Obtaining knowledge sometimes causes me pain, but I do it anyway, because I want to.

>I think that maybe you’re talking about things that, upon reflection, we endorse.

I think that when you reflect what you are doing is “accessing” the many preferences stored in your brain and comparing them. So to me reflectively endorsed preferences are much more important and authentic, because they better reflect your complete values.

The fact that we can’t hold all our preferences in our conscious mind at the same time is a design flaw. You seem to be treating this design flaw as a virtue, by arguing that preferences only matter when we’re holding them in our conscious mind, and cease mattering when they go back into storage.

> I’m somewhat skeptical that it’s actually possible to completely separate wants and preferences such that completely satisfying someone’s wants fails to satisfy all of their preferences, but IF that is possible, then I suppose preferences would matter independent of happiness.

There are some good articles on Less Wrong about this topic. The three I found most informative were “The Neuroscience of Pleasure,” “Not For the Sake of Pleasure Alone,” and “A Crash Course in the Neuroscience of Human Desire,” by lukeprog (I believe that is Luke Muehlhauser, but am not certain). Basically he goes over the relevant neuroscience and concludes that it totally is possible to separate desires that give you pleasure from other desires, and pleasure from preferences in general. The idea that they are the same thing seems to be an illusion created by the fact that “desire” and “pleasure” signals are sent along the same nervous pathways, and often get commingled.

Ok, I think those LW articles help a lot to refine my position! I think the most salient point to the discussion is that there are three components to our reward system:

You are, of course, correct that a person can desire something that does not bring them hedonic pleasure, which is the difference between wanting and liking noted above. However, my position is simply that from an ethical standpoint, wanting and liking are both brain functions linked to reward. So the best possible reality is one where everyone has the parts of their brains activated which govern wanting AND liking (and learning). In other words, in an ideal universe, people would want the things they like, and they would receive the things they want.

The ethical insignificance of what we want is not so counter to our ethical intuitions (though, as I’ve said, I don’t trust those anyway). Consider the anti-wirehead:

I think it’s equally clear that if we had the power to force the anti-wirehead to stop wanting torture, it would be monstrous of us not to exercise that power. When someone desires things that unequivocally harm them, the best solution is to stop them from wanting those things. It’s also implicit in the concept of coherent extrapolated volition that what we actually want at the moment isn’t all that significant ethically, and that any ideal system will satisfy only the desires that people ought to have.

I don’t really think this changes much of what I was saying above. Satisfying a desire isn’t the same thing as pleasure, but it still falls under the umbrella of what I would call “feeling good.” A person who is denied what they want feels distress. In other words, they feel bad. So making people feel good just has to incorporate pleasure, desire, and any learned associations. But the goal is the same: feeling good and not feeling bad. Satisfying a desire isn’t the same kind of feeling good as hedonic pleasure, but it’s still feeling good.

I’m still unclear about why past preferences matter. I’m thinking less about preferences to have a good job and more that, in times of anger, I have wanted other people to feel pain or even to die. I no longer have those desires and disavow them completely. Is it really morally significant that, at one point, I wanted those things? I just disagree completely with your example about dead people. They don’t care about their reputations. The only people who care about dead people’s reputations are living people (which includes us, before we die, which is why people try to protect their legacies).

I stand by this statement from my original comment:

“From a utilitarian standpoint, the simplistic view is correct. Maximizing good brain sensations and minimizing bad brain sensations are all that matters. Eudaimonia might be an instrumental way of causing good brain sensations, given that people can’t just feel happy all of the time. But if Omega offers me the opportunity to cause everyone to continuously feel good all of the time, you bet I’m going to do it, and if you don’t, I think your ethics are seriously flawed.”

“Good brain sensations” just include things we like AND things we want (and learned associations). Eudaimonia might be one way of making our brains feel good (or less bad), but it’s the effect on our brains that matters, not actual reality.

Utilitarianism of all stripes is alien to my felt sense of morality — in many cases, the moral thing to do is not what would make me happiest, or even what would increase the sum of happiness, however defined. For instance, taking care of an elderly parent; it’s a miserable experience (trust me on that one), and there are many more constructive things one could do with one’s time than making a senile, barely-conscious, dying person slightly more comfortable, but no utilitarian argument could convince me it wasn’t my responsibility.

I’m deeply suspicious of any attempt to systematize or justify morality, which I regard as one of those quintessentially human and irrational things that can only be experienced, not explained. Certainly the experience can be clarified, and our appreciation of it improved; training in music theory can help us appreciate Mozart, but it can’t substitute for listening, nor produce a scientific ranking of composers. Failing to keep that in mind leads to zealotry. Musical zealots are merely annoying, but moral ones are dangerous.

If morality is irrational, then why should one be moral?

That’s a really strange question to an error theorist, and it’s one I find impossible to answer without resorting to analogy. I’ll at least keep the same one, and respond: Why should one listen to music?

The categorical statements “one should listen to music” and “one shouldn’t listen to music” are both false. A hypothetical statement like “one should listen to music if one enjoys it” is true, because given a certain set of preferences and conditions, it is rational to listen to music in some of them.

But that isn’t enough for morality, because it has to be binding on other people, so it (or its interpersonal part) can’t solely be about your own preferences.

The statement “one should not murder” is not different in kind from the statement “one should listen to music”. Both express the speaker’s preference for the behavior of his audience. Of course, the former preference is likely to be much more emphatic, even backed by coercion, but that’s a difference of degree.

Morality, in itself, not only doesn’t “have to be” binding on other people, it literally cannot be. A moral claim only constrains the behavior of its audience to the extent that they can be convinced (or coerced) to adopt it themselves. If you can demonstrate otherwise, you’ve solved the is-ought problem and history awaits.

Moral statements are about more than the speaker’s preference – “I don’t want you to murder” and “You shouldn’t murder” aren’t equivalent statements. Part of the difference is that they feel qualitatively different, but perhaps more important is that moral statements imply that the listener has sufficient reason to act morally. For example, “I want you to give me ice cream” is merely a statement about my preferences, but “You ought to give me ice cream” is about your reasons for action (of which your preferences may be a part, possibly the sole part). That’s why a true moral statement is binding – because it means that the listener has sufficient reason to act in a particular way, and would be irrational to act otherwise.

(This also means that it’s possible for someone to make moral statements contrary to their desires – for example, I may want you to give me ice cream, but nevertheless you aren’t obligated to give me ice cream.)

“You ought to give me ice cream” is only different from “I want you to give me ice cream” insofar as it makes an implicit appeal to a shared value as the reason for the gift. If you’re talking to a friend who knows you’re hungry, the appeal may work. If you’re talking to the owner of an ice-cream shop, or Darth Vader, or the universe in general, it probably won’t.

Folk morality says that the shared value is independent of the minds of the speaker and listener. Error theory says that it obviously isn’t, if you think about what is actually happening. The speaker’s and listener’s values may be objectively congruent or incongruent, but it is nonsensical to describe either as objectively “right” or “wrong”, any more than preferring Mozart to Bach is right or wrong.

Saying “You ought to give me ice cream” isn’t necessarily an appeal to a shared value (though it probably is). It could be that I don’t want ice cream and yet you still ought to give it to me. It’s most similar to the standard analysis of a one-shot PD: if I am Prisoner A, I want B to cooperate, but I admit that he ought to defect. The benefits to him of him defecting are only his value, not a shared value, and he ought to do it particularly because it’s his value.

I’m not arguing for folk morality, just for morality. Morality depends on value, which is agent-relative, but though we can’t say whether a value is correct on its own, we can say whether a system of preferences is inconsistent, whether a particular action follows from a set of preferences, etc, and those are all objective. Admittedly, folk morality says that the rightness of rules/actions is independent of an agent’s evaluative standpoint, and I agree that’s mistaken, but rejecting that doesn’t by itself reject morality altogether. For example, if it is better for you to agree not to kill me if I make a reciprocal agreement, we can say that it grounds a moral right to not be murdered, though nothing independent of our values ever enters the picture.

A preference utilitarian would probably hope that a disgusted-by-gay-people person has a preference for arete, such that they don’t want to hate people for no reason, which you could use to get them to agree to operant conditioning to undo their disgust.

If someone really, truly, consistently prefers not to look at gay people… Well, then they’re in fundamental conflict with us and I don’t think the answer should be easy. You might forcibly change their preferences, but I’d rather we not pretend we’ve done them a favor in that case.

Err, how much time have you spent around homophobes? A consistent, endorsed belief that gay people are disgusting seems to be the most common true rejection of homosexuality IME.

There has to be a little more to their rejection than that. I personally find two men having sex to be incredibly gross, and don’t like even thinking about it. However, I also know that my feeling of disgust does not give me a right to stop people who love each other from being together and expressing their love. To me that is as absurd as saying that my disgust at the idea of eating cooked broccoli somehow gives me a right to stop other people from eating it.

There has to be something else that turns “this thing is gross” into “we should hurt people who do this thing.” Something other people have that I don’t have.

You may be disgusted by cooked broccoli, but presumably you are not morally disgusted by it. The impulse to punish arises from moral disgust, a distinct emotion from mere aversion. (Distinct, but probably related, given the cultural ubiquity of morality being described by cleansing metaphors, disease metaphors, etc.)

Point being, the way homophobes feel about gay people is not the same as the way you feel about broccoli. It’s probably closer to the way you feel about pedophiles.

Depends on your threshold for “homophobe”. I’ve never really spoken to the kind of people who think homosexuality causes natural disasters, but in terms of the kind of people who just think a gay couple is something gross they shouldn’t have to look at…I basically watched my entire generation go through exactly the process I described.

Huh.

So, preference utilitarianism has been what I thought was the answer to ‘closest out of the common utilitarianisms to my actual moral system’ but the ‘preference utilitarianism’ I had in mind gives *completely* different answers/takes on the examples here than the presented ones. So if this is indeed an accurate portrayal of actual preference utilitarianism, I clearly need to switch references.

(The way I think about it wasn’t like ‘you want to give people what they want a lot’, but that what you’re trying to maximize is something like wellbeing, but people get to make the call on what serves their wellbeing. (So, that’s the preference part. But, the formulation is important because if someone says ‘I’m awful, lock me in a cellar to suffer’, then that’s *not* actually a call that that’s what serves their wellbeing, since along with us probably figuring that doesn’t serve their wellbeing, *they’re* not saying that serves their wellbeing either.)

(If someone isn’t making the call that something serves their wellbeing, but that’s the option they’re determining to take anyway, we still don’t get to violate/to force our choice on them, but at that point we are allowed to ‘be sad’ about it (where ‘be sad’ here means ‘get a lesser value on that part of our function’, rather than the actual emotion – the emotion is our right to have whenever in general.))

(Sidenote, what are the definition-boundaries of ‘utilitarianism’? Is it any kind of ‘do math with this’, or does it have to be ‘this specific form of math’. Because I figured out a way to encode my ‘don’t violate people, including over their wellbeing’ in math terms, but I’m not sure what to then call the resulting system.))

I figured I was closer to preference than hedonistic because preferences are more important to me than pleasure. So, say we have a person, and there is something that is 100% certain to increase their pleasure, but they’re saying no to it (in this case, not because they hate themselves, entirely ego-syntonically, etc.) My morality says ‘absolutely abide by their no’ (which is important, and I find the alternative rather, well, rapey) while it feels like hedonistic utilitarianism wouldn’t.

Naturalist, metaethical nihilist, and non-utilitarian consequentialist here.

I really like this post, but I think there’s a way out of a corner Ozy seems to think they painted themselves into. I don’t think we have to posit teleology (even as a useful fiction) in order to make sense of the sort of ethics Ozymandias is trying to advance.

We don’t need to think that people have intrinsic “ends” for which they’re meant. We just have to say that for any individual, there is a possible (physical, mental, social, political, economic) state in which she is most able to satisfy her own preferences and the preferences of the people she cares about; and that if we care about well-being, we should help her achieve this state. Nothing spooky needs to be invoked to talk like this. We don’t have to say that a person “naturally tends towards” her ideal state, or that she’s somehow committing an “intrinsic evil” if she doesn’t conform to it. Even as error theorists, we can say we want people to resemble their Ideal of Flourishing as far as possible, and that anyone else who subscribes to the hypothetical imperative “Maximize Well-Being!” should want that, too.

I don’t know how much sense I’m making. But the reference to teleology was probably flippant, so my comment may be much ado about nothing.

Wait, so who actually gets to define what someone’s eudaimonia is? What’s to stop Ozy’s eudaimonia from secretly being chastity or not blogging or something? All examples given have people’s eudaimoniae as being reflected either in preferences or pleasure.
